From LLMs to Agentic Workflows: How Domain Intelligence Matures

Large Language Models (LLMs) have changed how we interact with information. They can summarise, explain, draft, and reason at a level that was unthinkable only a few years ago.

However, as organisations begin using AI in domain-specific, high-stakes contexts such as regulation, law, finance, healthcare, or strategy, a critical question emerges:

When is an LLM enough, and when do you need something more structured?

This article explains the progression from simple LLM usage to fully agentic workflows, and why that evolution matters.

⸻

- The core problem: fluency is not reliability

LLMs are excellent at producing answers that sound correct.

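That strength is also their weakness: an answer can sound correct without being correct, and its fluency gives no signal of the difference.

In general domains, an occasional plausible but wrong answer is acceptable. In regulated or expert domains, it is not.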

Domain work requires:

1. Correct interpretation of formal rules

2. Clear jurisdictional boundaries

3. Traceability back to authoritative sources

4. Defensible reasoning under scrutiny

LLMs alone do not guarantee these properties.

⸻

- A maturity continuum, not a binary choice

AI systems used for domain intelligence tend to evolve along a continuum:

1. Basic LLM

General reasoning and language generation. Fast, flexible, but ungrounded.

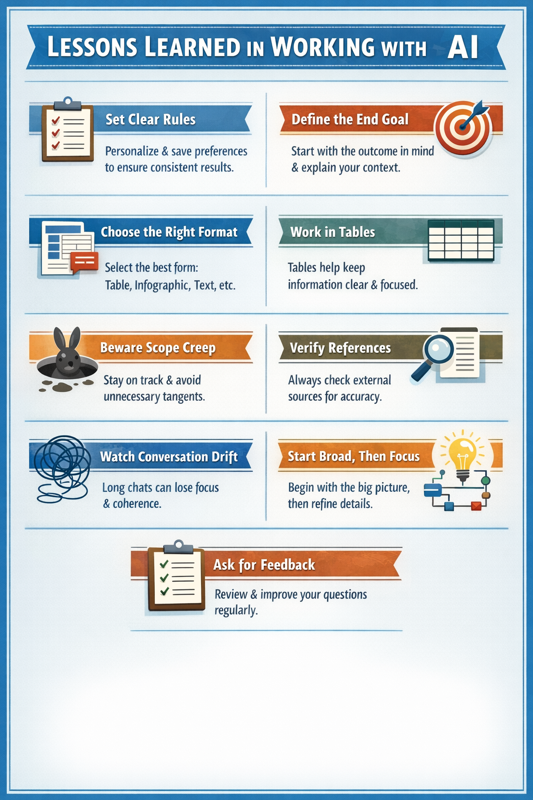

2. LLM + Prompt Discipline

Better consistency through structured prompts, but still reliant on the model's recall.

3. LLM + RAG (Retrieval-Augmented Generation)

Answers are grounded in documents, policies, or knowledge bases.

4. Semi-Agentic Systems

Tools, checks, and limited validation steps are introduced.

5. Full Agentic Workflows

Explicit rules, verification steps, jurisdiction control, and failure handling.

Each step along the continuum adds control, reliability, and auditability.

Agentic workflows are not an alternative to LLMs; they are their governed, operational form.

⸻

- When does agentic become necessary?

The deciding factor is not technical sophistication.

It is risk.

Two questions determine the appropriate architecture:

1. What is the cost of being wrong?

2. Do I need to explain or defend the answer to someone else?

If both are low, a simple LLM is sufficient.

If either is high, relying on a single model becomes dangerous.
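The two-question test can be expressed as a tiny decision function. This is a minimal sketch, assuming each question is answered as a simple high/low flag; the names `Level`, `UseCase`, and `recommend` are hypothetical illustrations. It deliberately collapses the continuum to its two endpoints, mirroring the rule of thumb, so choosing an intermediate stage remains a judgment call.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    # The five stages of the continuum; this sketch only ever returns the endpoints.
    BASIC_LLM = 1
    PROMPT_DISCIPLINE = 2
    RAG = 3
    SEMI_AGENTIC = 4
    AGENTIC_WORKFLOW = 5


@dataclass
class UseCase:
    name: str
    high_cost_of_error: bool  # Question 1: what is the cost of being wrong?
    must_defend_answer: bool  # Question 2: must the answer be explained or defended?


def recommend(use_case: UseCase) -> Level:
    """If either risk factor is high, escalate to a governed workflow."""
    if use_case.high_cost_of_error or use_case.must_defend_answer:
        return Level.AGENTIC_WORKFLOW
    return Level.BASIC_LLM


# Example: brainstorming is low on both questions; a regulatory assessment is high on both.
brainstorm = UseCase("outline a blog post", False, False)
filing = UseCase("assess a regulatory filing", True, True)
print(recommend(brainstorm).name)
print(recommend(filing).name)
```

Note that a single high answer is enough to escalate: the test uses `or`, not `and`.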

This is why agentic workflows appear first in:

• Regulatory analysis

• Legal reasoning

• Financial decision support

• Safety-critical or compliance-driven domains

In these contexts, confidence without justification is a liability.

⸻

- What makes an agentic workflow different?

An agentic workflow introduces elements that LLMs do not provide on their own:

1. Explicit rules

Formal constraints encoded outside the model.

2. Source authority

Clear prioritisation of documents, clauses, or standards.

3. Verification steps

Independent checks before answers are finalised.

4. Failure states

The system can stop, flag uncertainty, or request clarification.

5. Traceability

Every conclusion can be traced back to inputs and rules.

This transforms AI from a conversational assistant into a domain system.
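The five elements above can be sketched as a small control loop wrapped around a model's output. This is a minimal illustration, assuming the model's draft answer has already been produced and is simply passed in as a string; `Source`, `Result`, `run_workflow`, the numeric authority ranking, and the example rule are all hypothetical, not the API of any particular agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass
class Source:
    doc_id: str
    authority: int  # hypothetical ranking: lower number = more authoritative
    text: str


@dataclass
class Result:
    status: str  # "answered" or "flagged"
    answer: Optional[str]
    trace: List[str] = field(default_factory=list)  # traceability log


# Explicit rule: a named check encoded outside the model.
Rule = Tuple[str, Callable[[str, Source], bool]]


def run_workflow(draft: str, sources: List[Source], rules: List[Rule]) -> Result:
    trace: List[str] = []
    if not sources:
        # Failure state: no authoritative input, so stop instead of guessing.
        trace.append("no sources supplied; stopping")
        return Result("flagged", None, trace)

    # Source authority: ground the check in the highest-ranked document.
    primary = min(sources, key=lambda s: s.authority)
    trace.append(f"primary source: {primary.doc_id} (authority {primary.authority})")

    # Verification: every rule must pass before the answer is released.
    for name, check in rules:
        passed = check(draft, primary)
        trace.append(f"rule {name}: {'pass' if passed else 'fail'}")
        if not passed:
            # Failure state: withhold the draft and flag it for review.
            return Result("flagged", None, trace)

    return Result("answered", draft, trace)


# Example: a hypothetical rule requiring the draft to cite the primary source.
sources = [
    Source("guidance-note", 2, "Non-binding guidance."),
    Source("regulation-art-5", 1, "Binding rule text."),
]
rules: List[Rule] = [("cites_primary_source", lambda d, s: s.doc_id in d)]

ok = run_workflow("Per regulation-art-5, the threshold applies.", sources, rules)
bad = run_workflow("The threshold applies.", sources, rules)
print(ok.status, ok.trace)
print(bad.status, bad.trace)
```

The key design choice is that a failed check returns no answer at all rather than a weakened one, and every decision is appended to `trace`, so a reviewer can see which source was relied on and which rule stopped the output.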

⸻

- Why complexity can be justified

Agentic systems are more complex to build.

That complexity only creates value when it buys certainty.

In low-risk scenarios, complexity is wasteful.

In high-risk scenarios, simplicity is irresponsible.

The mistake many organisations make is treating all AI use cases as equal. They are not.

⸻

- A practical rule of thumb

- If an answer only needs to help you think, use an LLM.

- If an answer must stand up to scrutiny, use an agentic workflow.

⸻

- Final thought

The future of applied AI is not about choosing between LLMs and agents.

It is about placing LLMs inside systems that understand rules, risk, and responsibility.

That is how domain intelligence matures.

⸻