- why the main constraint in serious AI systems is usually the operating environment, not the model

- where AI initiatives fail in regulated and high-stakes settings

- what leaders should ask instead if they want usable, governable systems

The value of AI in regulated and high-stakes environments is not primarily in generation. It is in improving judgment.

That is the core point.

In serious operating environments, AI becomes useful when it helps people find the right information, frame the right decision, and move with more clarity under real constraints. It becomes dangerous when it is treated as a shortcut around operating discipline.

That is why the usual public conversation about AI still misses the mark. It focuses too much on the model and too little on the surrounding system.

The Real Constraint Is Not the Model

Most AI commentary assumes the working environment is clean, reversible, and low-stakes.

It assumes the documents are usable, the metadata is coherent, the workflow is simple, and the consequences of error are limited. In that frame, the main question becomes whether the model is capable enough.

That is not how serious environments work.

Once the stakes rise, the limiting factor is rarely the raw model. It is the system around it:

- the quality of the underlying documents

- the reliability of metadata and retrieval

- the fit with real operational workflows

- the level of governance and traceability required

- the ability to defend decisions under scrutiny

That is where many AI initiatives fail. The demo works. The operating system does not.

What AI Is Actually Good For

In regulated and high-accountability settings, AI is most useful when it delivers five things.

1. Faster access to relevant information

In document-heavy environments, the first problem is often not analysis. It is access. Teams need to find the right material quickly, even when the information estate is large, fragmented, and inconsistently structured.

2. Better framing of the problem

AI can help surface patterns, summarize complexity, and expose gaps. That matters because many bad decisions start with a badly framed problem.

3. Stronger decision support

Used properly, AI can help compare options, clarify constraints, and support faster judgment without pretending to replace it.

4. Operational leverage

Well-designed AI systems can reduce friction in repetitive analysis, information triage, and early-stage interpretation. That is less glamorous than the market narrative, but much closer to where the practical value sits.

5. More usable systems under pressure

The real test is whether the system still works when the environment is messy, time is limited, and the decision matters.

Where Things Break

Most failures are not model failures. They are operating failures.

Document quality is often uneven. Some documents are structured and usable; others are scanned, inconsistent, or broken by poor formatting. Tables do not parse properly. Context gets split across appendices, attachments, and disconnected files.

Metadata is often weaker than teams believe. Many organizations think they have a searchable knowledge base when they actually have a large archive with unreliable naming, weak tagging, and inconsistent structure.

Workflow fit is another common failure point. A tool may perform well in isolation but fail once it has to sit inside real review, governance, or decision-making processes. If it cannot survive handoff, scrutiny, and audit pressure, it is not operationally useful.

Then there is governance. In regulated environments, traceability is not optional. Evidence matters. Decision logic matters. The ability to explain how a conclusion was reached matters. If AI increases speed but reduces defensibility, it weakens the system rather than improving it.

Why Regulated Environments Matter

Regulated environments reveal the truth about AI faster than most other settings.

That is one reason I find them so useful as a lens.

In MedTech, diagnostics, laboratory services, and other high-accountability contexts, the cost of sloppy thinking is higher. Decisions affect compliance, commercial risk, operational credibility, and sometimes patient pathways. Weak systems get exposed quickly.

That pressure forces better questions:

- Can the information be trusted?

- Can the process be repeated?

- Can the output be governed?

- Can the workflow survive real-world use, not just a pilot?

- Can the organization explain what it is doing if challenged?

These are not anti-AI questions. They are the questions that make AI useful.

The Operating System Matters More Than the Demo

The real opportunity is not in producing more impressive demos. It is in building systems where AI sits inside a disciplined operating structure.

That means:

- better document handling

- stronger metadata design

- clearer decision pathways

- tighter workflow integration

- appropriate governance and traceability

The model matters. But in serious environments it is rarely the decisive factor. The decisive factor is whether the surrounding operating system makes that intelligence usable, governable, and reliable.

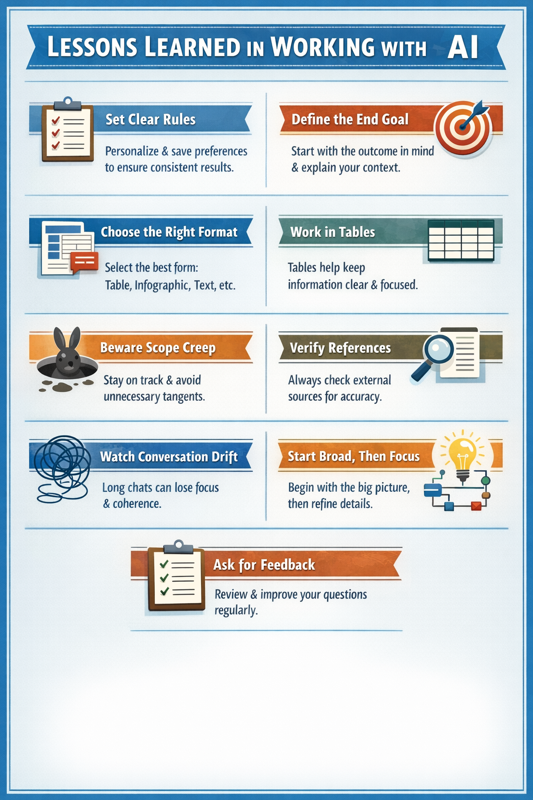

What Leaders Should Ask Instead

If you are evaluating AI for a regulated or high-stakes environment, the most useful questions are not the usual ones.

Not:

- which model is newest

- which demo looks most impressive

- which vendor promises the most automation

Instead:

- what decision-quality problem are we trying to improve?

- how good is the underlying information base?

- where do document quality or metadata failures break the workflow?

- what level of traceability do we need?

- what would make this system trustworthy in day-to-day use?

- where does human judgment remain essential?

Those questions are less marketable than the standard AI pitch. They are also much closer to the truth.

Closing

AI becomes more valuable as the consequences become clearer.

That is where weak systems fail and where well-designed systems create real leverage. In regulated and high-stakes environments, the future of AI will be decided less by novelty and more by whether the surrounding system is strong enough to make that intelligence useful under real pressure.

If you are working through that kind of challenge, explore the related essays or get in touch.

References and Frameworks

- Barbara Minto’s Pyramid Principle as a useful reference for top-down communication

- the practical distinction between model capability and operating-system strength

- regulated-environment design principles: traceability, defensibility, and workflow fit